CUDA

Introduction

CUDA is an extension to the C and C++ programming languages plus a runtime environment for managing GPU-based parallel computing. CUDA is developed by Nvidia (https://developer.nvidia.com/cuda-zone) and is currently the most popular hardware-accelerated parallel computing solution in computer vision and deep learning. CUDA programs run on Nvidia graphics cards (sold to computer gaming enthusiasts) or on Nvidia cards dedicated to parallel computing.

Installing on Ubuntu

Installing Nvidia drivers with ubuntu-drivers

First, install the ubuntu-drivers utility:

sudo apt install ubuntu-drivers-common

Check if ubuntu-drivers reports your Nvidia card:

ubuntu-drivers devices

Then install the drivers for the card

sudo ubuntu-drivers autoinstall

and reboot.

Installing Nvidia drivers manually

First, check whether the installed Nvidia driver meets the requirement of the CUDA toolkit version you want to install. For example, CUDA Toolkit 9.1 requires driver version 390 or newer. To install that driver, type

sudo apt install nvidia-390 nvidia-settings

followed by a restart of your computer. If necessary, you can reconfigure the kernel module with this new driver by typing

sudo dpkg-reconfigure nvidia-390

Ubuntu 16.04

The Debian package for CUDA development in the Ubuntu/Launchpad repositories is nvidia-cuda-dev. If the version of CUDA included in this package is too old for your graphics card (cards with the Pascal architecture require CUDA 8.0 or newer), you can download the CUDA Debian package for the latest version from NVIDIA's CUDA download page. Install it with these terminal commands:

sudo dpkg -i cuda-repo-ubuntu1604-xxxxxxxxxx.deb
sudo apt-get update
sudo apt-get install cuda

The reason for running apt-get update is that the Debian package does not install CUDA directly; it only sets up a package repository. If you downloaded the local version, the package copies the contained packages into the folder /var/cuda-repo-xxx-local and registers that folder as a third-party repository (/etc/apt/sources.list.d/cuda-xxx-local.list).

After updating to a new version of CUDA, don't forget to rebuild all libraries and applications that link against CUDA, for example PyTorch and OpenCV.

Ubuntu 18.04

At the time of writing, there is no officially supported CUDA development kit for Ubuntu 18.04. However, you can follow these instructions, which I found on askubuntu.com, to install CUDA 9.1 from the multiverse repository:

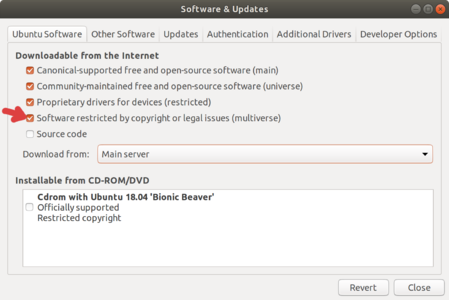

First, enable the multiverse repository, for example with

sudo add-apt-repository multiverse

Then install CUDA:

sudo apt install nvidia-cuda-toolkit

which will also install GCC 6 alongside Ubuntu's default compiler version GCC 7.3.

Because CUDA 9.1 does not support GCC 7, you need to build programs that use it with GCC 6, so it helps to be able to switch easily between GCC versions 7 and 6. Read Switching between GCC versions in Ubuntu to find out how to do this.
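Whichever installation route was used, a small program can confirm that the driver and the toolkit fit together. The following is a minimal sketch, not an official check: the struct CudaSetup and the helper functions are names invented for this example, while cudaDriverGetVersion, cudaRuntimeGetVersion and cudaGetDeviceCount are CUDA runtime API calls. Note that the reported driver value is the newest CUDA version the driver supports, not the driver release number (such as 390).

```cuda
#include <cstdio>
#include <cuda_runtime.h>

// Hypothetical helper type for this example.
// Versions are encoded as 1000 * major + 10 * minor, e.g. 9010 for CUDA 9.1.
struct CudaSetup {
    int driver_version = 0;   // newest CUDA version supported by the driver (0 = no driver)
    int runtime_version = 0;  // version of the CUDA runtime this program uses
    int device_count = 0;     // number of CUDA-capable devices found
};

CudaSetup query_cuda_setup() {
    CudaSetup s;
    cudaDriverGetVersion(&s.driver_version);
    cudaRuntimeGetVersion(&s.runtime_version);
    if (cudaGetDeviceCount(&s.device_count) != cudaSuccess) {
        s.device_count = 0;  // no usable device or driver
    }
    return s;
}

// The driver must support at least the CUDA version of the runtime in use.
bool setup_is_usable(const CudaSetup& s) {
    return s.device_count > 0 && s.driver_version >= s.runtime_version;
}

void print_cuda_setup(const CudaSetup& s) {
    std::printf("driver supports CUDA %d.%d\n",
                s.driver_version / 1000, (s.driver_version % 1000) / 10);
    std::printf("runtime is CUDA %d.%d\n",
                s.runtime_version / 1000, (s.runtime_version % 1000) / 10);
    std::printf("devices found: %d\n", s.device_count);
}
```

Calling print_cuda_setup(query_cuda_setup()) from main prints the three values; if setup_is_usable returns false, the driver is probably too old for the installed toolkit or no Nvidia card was detected.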

Execution Model

- Threads are grouped into blocks. A block can contain up to 1024 threads.

- The GPU schedules the assignment of blocks to the available streaming multiprocessors (SMs).

- Synchronization points (barriers), at which all threads of a block wait until the last thread has arrived.

- Atomic operations for synchronizing memory access across threads.
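The points above can be seen together in a small reduction. This is only a sketch under assumptions: the names sum_kernel, sum_on_gpu and kThreadsPerBlock are invented for the example, the block size of 256 is an arbitrary power of two below the 1024 limit, and error checking is left out for brevity.

```cuda
#include <cuda_runtime.h>
#include <vector>

constexpr int kThreadsPerBlock = 256;  // assumption: a power of two, at most 1024

// Each block adds up its slice of `in`; one thread per block then adds the
// block's partial sum to the global result with an atomic operation.
__global__ void sum_kernel(const int* in, int n, int* result) {
    __shared__ int partial[kThreadsPerBlock];
    int i = blockIdx.x * blockDim.x + threadIdx.x;  // global thread index
    partial[threadIdx.x] = (i < n) ? in[i] : 0;
    __syncthreads();  // barrier: all threads of the block have stored their value

    // Tree reduction inside the block, halving the active threads each round.
    for (int stride = blockDim.x / 2; stride > 0; stride /= 2) {
        if (threadIdx.x < stride) {
            partial[threadIdx.x] += partial[threadIdx.x + stride];
        }
        __syncthreads();  // barrier before the next round reads the sums
    }

    // Blocks run in an order chosen by the GPU, so the update must be atomic.
    if (threadIdx.x == 0) {
        atomicAdd(result, partial[0]);
    }
}

int sum_on_gpu(const std::vector<int>& values) {
    int n = static_cast<int>(values.size());
    if (n == 0) return 0;

    int* d_in = nullptr;
    int* d_result = nullptr;
    cudaMalloc(&d_in, n * sizeof(int));
    cudaMalloc(&d_result, sizeof(int));
    cudaMemcpy(d_in, values.data(), n * sizeof(int), cudaMemcpyHostToDevice);
    cudaMemset(d_result, 0, sizeof(int));

    // Enough blocks to cover all n elements.
    int blocks = (n + kThreadsPerBlock - 1) / kThreadsPerBlock;
    sum_kernel<<<blocks, kThreadsPerBlock>>>(d_in, n, d_result);

    int result = 0;
    cudaMemcpy(&result, d_result, sizeof(int), cudaMemcpyDeviceToHost);  // waits for the kernel
    cudaFree(d_in);
    cudaFree(d_result);
    return result;
}
```

The host code only chooses how many blocks to launch; which streaming multiprocessor runs which block, and in what order, is left to the GPU.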

Memory Model

- Thread-local variables, which are usually kept in registers. Fastest to access.

- Block-local shared memory (declared with __shared__), shared by the threads in the same block, with fast access speed.

- Global memory that is shared by ALL threads. Slow access speed, unless cached.
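To show where each kind of memory appears in code, here is a hedged sketch that reverses the element order inside every chunk of an array. The names reverse_chunks, reverse_chunks_on_gpu and kTile are made up for this example, the tile size of 128 is arbitrary, and error checking is omitted.

```cuda
#include <cuda_runtime.h>
#include <vector>

constexpr int kTile = 128;  // assumption: threads per block and size of the shared tile

// Reverses the order of the elements inside each chunk of kTile values.
__global__ void reverse_chunks(const float* in, float* out, int n) {
    __shared__ float tile[kTile];  // block-local shared memory

    // Thread-local variables; the compiler normally keeps them in registers.
    int t = threadIdx.x;
    int start = blockIdx.x * blockDim.x;
    int count = n - start;               // elements in this block's chunk
    if (count > kTile) count = kTile;

    if (t < count) tile[t] = in[start + t];               // one read from global memory
    __syncthreads();                                      // the whole tile must be loaded
    if (t < count) out[start + t] = tile[count - 1 - t];  // one write to global memory
}

std::vector<float> reverse_chunks_on_gpu(const std::vector<float>& values) {
    int n = static_cast<int>(values.size());
    std::vector<float> result(values.size());
    if (n == 0) return result;

    float* d_in = nullptr;   // both buffers live in global memory
    float* d_out = nullptr;
    cudaMalloc(&d_in, n * sizeof(float));
    cudaMalloc(&d_out, n * sizeof(float));
    cudaMemcpy(d_in, values.data(), n * sizeof(float), cudaMemcpyHostToDevice);

    int blocks = (n + kTile - 1) / kTile;
    reverse_chunks<<<blocks, kTile>>>(d_in, d_out, n);

    cudaMemcpy(result.data(), d_out, n * sizeof(float), cudaMemcpyDeviceToHost);
    cudaFree(d_in);
    cudaFree(d_out);
    return result;
}
```

Each element travels through global memory only twice; the reordering itself happens in the shared tile, which is why staging data in shared memory is a common pattern when threads of a block need each other's values.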

Best Practices

- Use coalesced memory access: neighboring threads should access contiguous memory locations.

- Prevent code paths from diverging (due to for loops or 'if' statements) so that threads take a similar amount of time and the compute units are better utilized.
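The difference between coalesced and non-coalesced access is easiest to see when two kernels compute the same result with different index patterns. The sketch below makes several assumptions: the kernel and function names are invented, the launch size of 32 blocks with 256 threads is arbitrary, and no timing is measured; on most hardware the coalesced variant can be expected to be faster, but that should be verified with a profiler.

```cuda
#include <cuda_runtime.h>
#include <vector>

constexpr int kBlocks = 32;    // assumption: arbitrary launch size for this sketch
constexpr int kThreads = 256;

// Coalesced: in every loop iteration, neighboring threads touch neighboring
// elements. All threads also run (almost) the same number of iterations,
// so their code paths stay together.
__global__ void scale_coalesced(float* data, int n, float factor) {
    int total = gridDim.x * blockDim.x;
    for (int i = blockIdx.x * blockDim.x + threadIdx.x; i < n; i += total) {
        data[i] *= factor;
    }
}

// Not coalesced: every thread walks its own contiguous chunk, so in each
// iteration neighboring threads are `chunk` elements apart.
__global__ void scale_chunked(float* data, int n, float factor) {
    int total = gridDim.x * blockDim.x;
    int chunk = (n + total - 1) / total;
    int begin = (blockIdx.x * blockDim.x + threadIdx.x) * chunk;
    for (int i = begin; i < begin + chunk && i < n; ++i) {
        data[i] *= factor;
    }
}

std::vector<float> scale_on_gpu(std::vector<float> values, float factor, bool coalesced) {
    int n = static_cast<int>(values.size());
    if (n == 0) return values;

    float* d_data = nullptr;
    cudaMalloc(&d_data, n * sizeof(float));
    cudaMemcpy(d_data, values.data(), n * sizeof(float), cudaMemcpyHostToDevice);

    if (coalesced) {
        scale_coalesced<<<kBlocks, kThreads>>>(d_data, n, factor);
    } else {
        scale_chunked<<<kBlocks, kThreads>>>(d_data, n, factor);
    }

    cudaMemcpy(values.data(), d_data, n * sizeof(float), cudaMemcpyDeviceToHost);
    cudaFree(d_data);
    return values;
}
```

Both kernels touch every element exactly once; only the mapping from thread index to array index differs, and that mapping decides whether the hardware can combine the accesses of neighboring threads into few memory transactions.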

Building CUDA Files In Your C/C++ Project

- Add a build phase in which the CUDA source files are compiled by the CUDA compiler nvcc.

- nvcc generates the binary code that will be loaded into the GPU.

- nvcc also generates the C/C++ code for the host side (CPU) by replacing CUDA syntax with CUDA runtime calls.

- Use the nvcc option --compile or -c for calling the host compiler (gcc or clang) to compile the generated C/C++ code down to machine code.

- Link against the CUDA runtime library libcudart.
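The build steps above could look as follows for a project with one CUDA file. Everything specific here is an assumption made for illustration: the file names kernels.cu and main.cpp, the function add_vectors, the use of g++ as host compiler and linker, and the library path mentioned in the comment. Error checking is omitted.

```cuda
// kernels.cu (hypothetical file name)
//
// Possible build steps, assuming nvcc and g++ are on the PATH. If the linker
// cannot find libcudart, add the toolkit's library directory, for example
// -L/usr/local/cuda/lib64 (the path depends on how CUDA was installed).
//
//   nvcc -c kernels.cu -o kernels.o        # GPU code and host-side code -> object file
//   g++  -c main.cpp   -o main.o           # ordinary C++ without CUDA syntax
//   g++  main.o kernels.o -lcudart -o app  # link against the CUDA runtime

#include <cuda_runtime.h>

__global__ void add_kernel(const float* a, const float* b, float* out, int n) {
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) out[i] = a[i] + b[i];
}

// Host-side entry point with a plain C++ signature. main.cpp only needs the
// declaration and never sees any CUDA syntax.
void add_vectors(const float* a, const float* b, float* out, int n) {
    if (n <= 0) return;
    size_t bytes = static_cast<size_t>(n) * sizeof(float);

    float* d_a = nullptr;
    float* d_b = nullptr;
    float* d_out = nullptr;
    cudaMalloc(&d_a, bytes);
    cudaMalloc(&d_b, bytes);
    cudaMalloc(&d_out, bytes);
    cudaMemcpy(d_a, a, bytes, cudaMemcpyHostToDevice);
    cudaMemcpy(d_b, b, bytes, cudaMemcpyHostToDevice);

    int threads = 256;
    int blocks = (n + threads - 1) / threads;
    add_kernel<<<blocks, threads>>>(d_a, d_b, d_out, n);

    cudaMemcpy(out, d_out, bytes, cudaMemcpyDeviceToHost);
    cudaFree(d_a);
    cudaFree(d_b);
    cudaFree(d_out);
}
```

In main.cpp it is then enough to declare void add_vectors(const float* a, const float* b, float* out, int n); and call it like any other function. Keeping all CUDA syntax inside the .cu files means the rest of the project can be compiled by the normal host compiler.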

External Resources

- CUDA official documentation home page

- NVCC

- Online Course Intro To Parallel Programming, Udacity

- PyCUDA Python binding